AI cheating has become the defining headache of modern education. Students use ChatGPT to write essays. They use Wolfram Alpha to solve maths problems. They use any number of tools to generate work that looks like theirs but isn't. And teachers are exhausted.

The standard response has been detection: plagiarism checkers, AI writing detectors, proctoring software. But detection is a losing battle. Every new detection tool spawns new evasion techniques. It's an arms race with no end in sight.

What if there's a better approach? What if, instead of trying to catch AI-generated work, we designed assessment in ways that make AI irrelevant?

That's exactly what I'll be discussing at Pearson's Immersive Practitioners' Community Webinar on Thursday, 26th February 2026. If you're a UK educator interested in how immersive technology can transform assessment, I'd love for you to join us.

Event Details

- Date: Thursday, 26th February 2026

- Time: 3:30pm to 5:00pm (UK)

- Format: Online webinar with Q&A

- Audience: UK educators across schools, colleges, and higher education

- Cost: Free

What We'll Cover

The webinar brings together Pearson's latest research on GenAI and assessment with practical insights from WhimsyLabs on implementing VR-based assessment in real classrooms. The session includes:

- Pearson's GenAI research: What the data tells us about AI's impact on assessment validity

- Best practice for VR assessment: How to design and implement assessment in immersive environments

- Open discussion: Share experiences and challenges with fellow educators

Why Process-Driven Assessment Changes Everything

Traditional assessment asks: "What did the student produce?" This creates a fundamental problem in the AI age. If you're only looking at the final output, you can't distinguish between a student who understands the material and one who prompted ChatGPT effectively.

Process-driven assessment asks a different question: "What did the student actually do?"

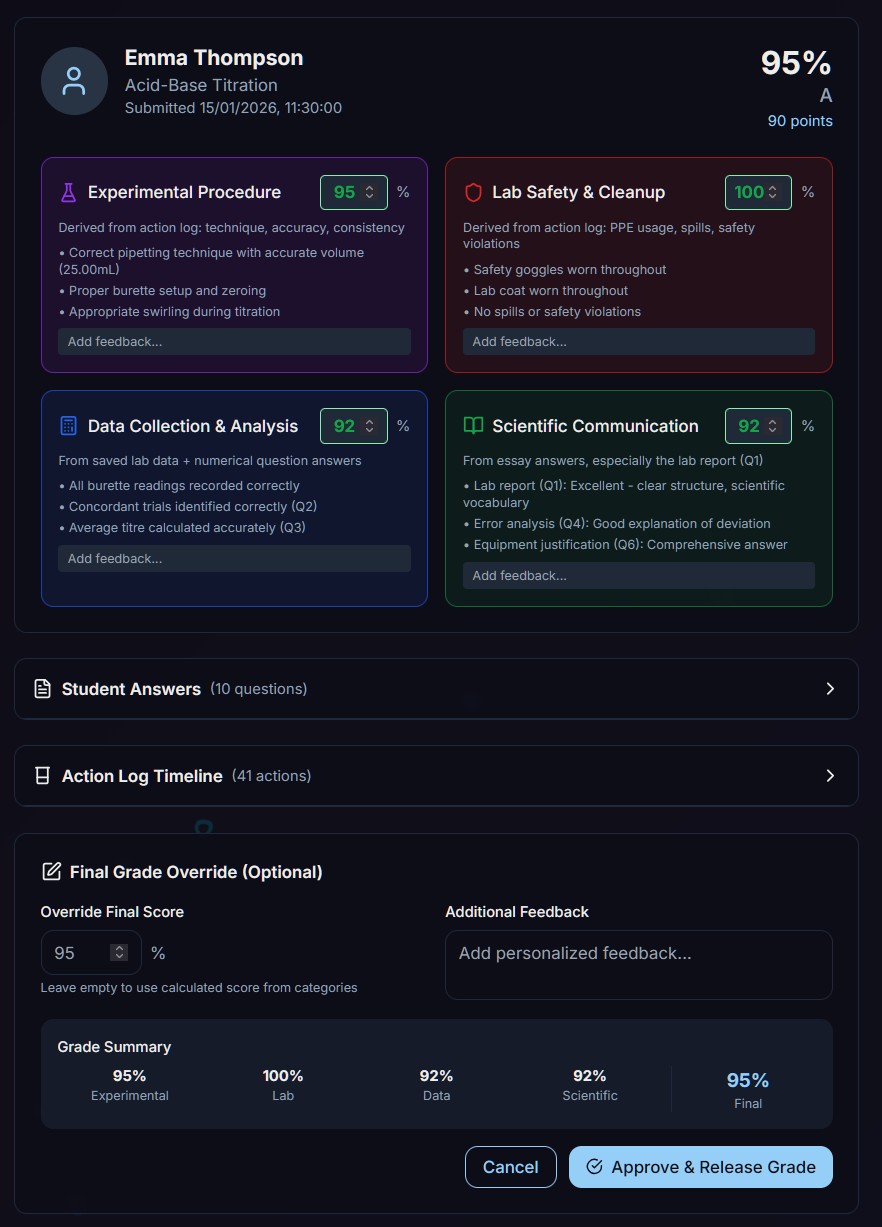

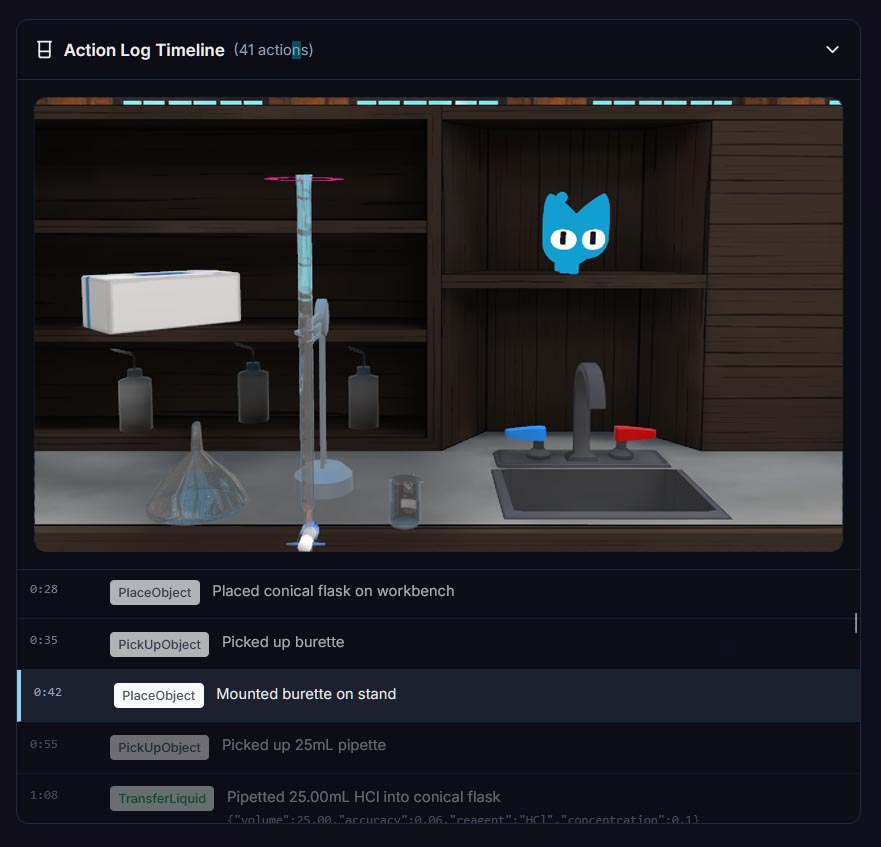

In a virtual laboratory environment, we can answer that question with precision. When a student performs a titration in WhimsyLabs, we capture everything: Did they rinse the burette before filling it? Did they add the indicator to the conical flask? Did they approach the endpoint slowly, adding drops one at a time? Did they record their readings accurately?
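To make that concrete, here is a minimal sketch in Python of how a log of student actions could be turned into those yes/no checks. It is an illustration only, not WhimsyLabs' actual implementation: the `Event` structure, the action names (`rinse_burette`, `add_titrant` and so on), and the 0.1 ml threshold for a "slow" final addition are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    t: float                 # seconds since the start of the attempt
    action: str              # hypothetical action name, e.g. "rinse_burette"
    volume_ml: float = 0.0   # titrant added, only used by "add_titrant" events


def check_titration(events, max_final_addition_ml=0.1):
    """Derive pass/fail technique checks from a chronological event log.

    The threshold is an assumed value for illustration, not a standard.
    """
    actions = [e.action for e in events]

    def first(action):
        # Index of the first occurrence of an action, or None if it never happened.
        return actions.index(action) if action in actions else None

    rinse = first("rinse_burette")
    fill = first("fill_burette")
    indicator = first("add_indicator")
    first_addition = first("add_titrant")
    additions = [e.volume_ml for e in events if e.action == "add_titrant"]

    return {
        # The burette must be rinsed, and rinsed before it is filled.
        "rinsed_before_filling": rinse is not None and fill is not None and rinse < fill,
        # The indicator must be in the flask before any titrant is added.
        "indicator_before_titrant": indicator is not None
        and (first_addition is None or indicator < first_addition),
        # The last addition should be small, i.e. a dropwise approach to the endpoint.
        "slow_near_endpoint": bool(additions) and additions[-1] <= max_final_addition_ml,
        # A burette reading must have been recorded at some point.
        "reading_recorded": "record_reading" in actions,
    }


# A hypothetical attempt with good technique throughout.
attempt = [
    Event(0, "rinse_burette"),
    Event(5, "fill_burette"),
    Event(10, "add_indicator"),
    Event(15, "add_titrant", 10.0),
    Event(20, "add_titrant", 0.05),
    Event(25, "record_reading"),
]
report = check_titration(attempt)
```

The point of the sketch is that every check is computed from what the student did and in what order, so there is no written artefact for a chatbot to produce on their behalf.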

This isn't about surveillance. It's about capturing the skills that actually matter in science education. A student who can recite the steps of a titration hasn't demonstrated competence. A student who can perform a titration properly, with appropriate technique and attention to precision, has demonstrated real practical ability.

The Research Behind Process-Driven Assessment

This isn't just theory. A recent paper in Frontiers in Education (Alkouk & Khlaif, 2024) explored AI-resistant assessments in higher education. The key insight: when you track student actions rather than just final outputs, you create assessment that is naturally robust against AI assistance.

Why? Because AI can write about titrations. AI can describe the steps. AI can even generate realistic-looking data tables. But AI cannot perform a titration. It cannot demonstrate proper technique. It cannot show the procedural knowledge that comes from practice.

When assessment focuses on process, the question of "did they use AI?" becomes less relevant. What matters is: can they do the thing?

Making AI Irrelevant, Not Invisible

Let me be clear: the goal isn't to ban AI from education. AI tools are here to stay, and students should learn to use them effectively. The goal is to design assessment that evaluates what we actually care about.

In science education, we care about practical competence. We want students who can handle equipment safely. We want students who understand why each step of a procedure matters. We want students who can troubleshoot when things go wrong.

Virtual labs, with their complete process visibility, let us assess exactly these skills. Not instead of traditional assessment, but as a complement to it. Written work still has its place. But practical skills deserve assessment that actually captures practical ability.

What This Looks Like in Practice

At the webinar, I'll share concrete examples of process-driven assessment in action:

- Technique scoring: How WhimsyLabs' AI evaluates practical technique in real time

- Competency tracking: Measuring improvement over multiple attempts, not just final performance

- Error analysis: Understanding where students struggle and why

- Teacher dashboards: Giving educators visibility into practical skill development
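As a rough illustration of the competency tracking and error analysis ideas in the list above, the sketch below scores each attempt as the fraction of technique checks passed and reports the change between the first and latest attempt. The check names and the equal weighting are assumptions for the example, not a description of how WhimsyLabs scores students.

```python
def technique_score(checks: dict[str, bool]) -> float:
    """Fraction of technique checks passed in one attempt (equal weights assumed)."""
    if not checks:
        raise ValueError("an attempt needs at least one check")
    return sum(checks.values()) / len(checks)


def progress(attempts: list[dict[str, bool]]) -> dict:
    """Summarise a chronological list of attempts by the same student."""
    if not attempts:
        raise ValueError("at least one attempt is required")
    scores = [technique_score(checks) for checks in attempts]
    return {
        "scores": scores,
        # Positive means the latest attempt is better than the first.
        "change": scores[-1] - scores[0],
        # Checks still failed on the latest attempt: where to focus feedback.
        "still_failing": sorted(name for name, ok in attempts[-1].items() if not ok),
    }


# Three hypothetical attempts at the same practical.
history = [
    {"rinsed": False, "indicator": True, "slow_endpoint": False, "recorded": True},
    {"rinsed": True, "indicator": True, "slow_endpoint": False, "recorded": True},
    {"rinsed": True, "indicator": True, "slow_endpoint": True, "recorded": True},
]
summary = progress(history)
```

Looking at the whole history rather than the final score is what lets a teacher see that, in this made-up case, rinsing was fixed first and the endpoint technique came later.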

I'll also discuss the challenges we've encountered and how we've addressed them. Process-driven assessment isn't a magic solution. It requires thoughtful implementation and clear communication with students about what's being assessed and why.

Who Should Attend

This webinar is designed for UK educators who are:

- Concerned about AI's impact on assessment validity

- Curious about immersive technology in education

- Looking for practical approaches to authentic assessment

- Teaching science subjects at any level

- Involved in curriculum design or assessment policy

You don't need any prior experience with VR or immersive technology. The session is designed to be accessible to educators at all levels of technical familiarity.

Join the Conversation

The best part of these webinars is the discussion. Pearson's Immersive Practitioners' Community brings together educators from across the UK who are thinking seriously about how technology can serve learning. The Q&A session is always rich with insights and practical questions.

I'm looking forward to hearing what challenges you're facing and what solutions you've found. Assessment in the AI age is a problem we're all navigating together, and the best ideas often come from practitioners in the field.

Register Now

The webinar is free to attend, but registration is required. Spaces may be limited, so I'd encourage you to sign up early if you're interested.

I hope to see you there. And if you can't make it but want to learn more about process-driven assessment in virtual labs, feel free to get in touch directly. We're always happy to discuss how WhimsyLabs can support authentic assessment in your school or institution.

References

- Alkouk, W.A., & Khlaif, Z.N. (2024). AI-resistant assessments in higher education: practical insights from faculty training workshops. Frontiers in Education, 9, 1499495. https://doi.org/10.3389/feduc.2024.1499495

- Pearson (2025). Assessment Evolved: Redefining Formative Assessment in a Generative AI Era. Pearson Insights.